In the Pentagon Battle with Anthropic, We All Lose

The Pentagon and Anthropic have seen their relationship blow up in an argument over how the military could use Anthropic’s AI models. On the surface, this is a fight about defense contracts. Really, it is something much deeper: a stress test of how the United States governs frontier AI.

Anthropic is being eased out of Department of Defense contracting for classified networks, where it had been the dominant supplier of some kinds of AI services, and OpenAI is being eased in. This comes right after Anthropic services reportedly played an important role in the recent military actions in both Venezuela and Iran. So the stakes are high.

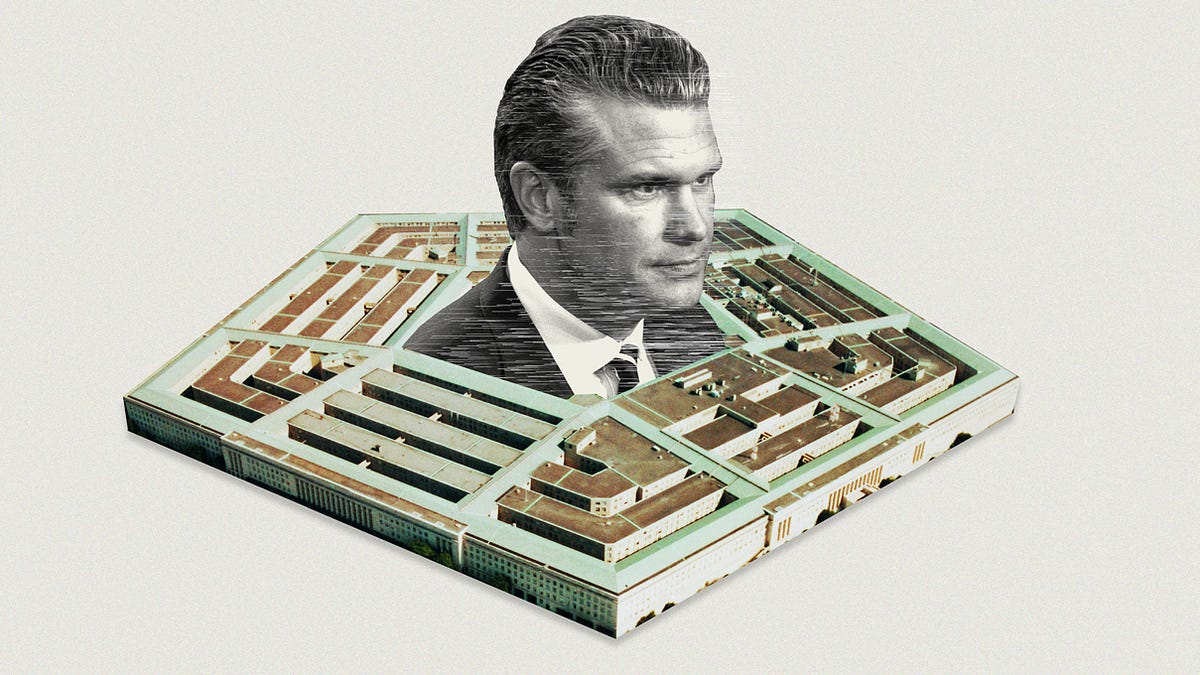

The fight between Secretary of Defense Pete Hegseth and Anthropic centers on how much Anthropic could restrict the military uses of its AI. The company wanted to limit the participation of its models in domestic surveillance and fully autonomous weaponry, i.e., instruments of killing where humans are completely removed from the loop. The DoD, in contrast, thinks that current legal restrictions on those activities suffice, and argued that Anthropic was seeking the right to impose unilateral restrictions on the military.

That fight appeared to come to a head on Friday, when Hegseth barred Anthropic from Pentagon contracts. In fact, that was a step back from the brink of what might have been a death sentence for Anthropic. Hegseth had previously threatened to label Anthropic a “supply chain risk,” the same kind of designation that applies to Chinese tech and telecom giant Huawei for its close links to China’s government. That escalation, at least according to the Department of Defense, would have meant that companies could deal with the Pentagon or Anthropic, but not both. That might have forced the companies that already have close relationships with Anthropic, like Amazon, to stop doing business with it, effectively closing down key parts of its business (though whether that would be the actual upshot is another matter of dispute).

The lack of an agreement on those and other issues has led to an uncomfortable divorce, albeit with a transition window of six months to remove Anthropic’s AI from classified systems. The details of the new deal with OpenAI are still being worked out, but presumably they will be more to the satisfaction of the Pentagon. OpenAI CEO Sam Altman urged that the terms they settle on serve as a template for AI in defense, one that is offered to all AI companies and that “everyone should be willing to accept.”

I am on the advisory board of Anthropic, and have had to think broadly about how the U.S. should approach the key emerging technology of our time. I’d like to move away from the “he said, she said” takes on what happened, and consider the bigger stakes in this fight over AI regulation. The basic problem is that our current methods of regulating advanced AI models—a function we’ve handed over largely to our national security establishment—are collapsing, and we do not have anything good to replace them with.

For Anthropic, the essential issues—the risks of a rogue AI making life and death decisions, the ways AI could be used in unprecedented programs of mass surveillance—are central to how we approach a powerful and unpredictable technology. For the Pentagon, these are already covered by existing laws, and not a subject for negotiation between the government and a company that seeks to define on its own what counts as a “safe” use of AI. It is, in other words, a conflict between visions of who decides how AI should be used.

Congress has not passed explicit regulation of AI foundation models, and an executive order from President Trump limited regulation at the state level. But do not think that laissez-faire reigns. In addition to existing (largely pre-AI) laws, which lay out general principles of liability, and laws from a few states, the United States is engaged in a kind of “off the books” soft regulation.

The major AI companies keep the national security establishment apprised of the progress they are making, as has been the case with Anthropic. There is a general sense within the AI industry that if the national security authorities saw anything in the new products that was very concerning or that might undermine the national interest, they would inform the president and Congress. That would likely lead to more formal and more restrictive kinds of regulation, so the major AI companies want to show relatively safe demos and products. An informal back and forth enforces implied safety standards, without the involvement of formal legislation.

That may sound like an unusual way to do regulation, but to date the system has worked relatively well. For one thing, I believe our national security establishment has a better and more sophisticated understanding of the issues than does Congress. Congress right now simply isn’t up to the job, as indeed the institution has been failing more generally. Most representatives seem to know little about the core issues behind AI regulation.

As it stands, AI progress has been allowed to proceed, and the United States has stayed ahead of China, without major catastrophes. The burden on the companies has been manageable, and the system, at least until last week, was flexible.

Another advantage of this system is that it does not depend on Congress or the administrative state, both of which can be very slow to act. The AI landscape can change in just weeks, yet our federal government is used to taking years to issue laws and directives. Had we passed AI legislation in, say, 2024, today it would be badly out of date, no matter what your point of view on what such regulation should accomplish. For instance, in 2024 few outsiders were much concerned with the properties of, or risks from, autonomous AI “agents.” Today that is the number-one topic of concern.

Though it is not driven by legislation, the status quo AI regulatory system is not anti-democratic, as it operates well within the rules passed by Congress and the administrative state. It is more correct to say the current AI guardrails rely on the threat of regulation, rather than regulation itself, with the national security state as the watchdog. The system sticks to a kind of creative ambiguity. The national security state offers no official imprimatur for the new advances, but they proceed nonetheless. At the same time, the various components of the national security state reserve the right to object in the future.

It is also correct, however, to believe that such a system cannot last forever. At some point creative ambiguity collapses. Someone or some institution demands a more formal answer as to what is allowed or what is not allowed. At that point a more directly legalistic system of adjudication enters the picture, and Congress likely starts paying more attention.

With the recent dispute between Hegseth and Anthropic, we have taken a step away from the previous regulatory mode of quiet cooperation. Instead, the relationship between the military and the AI companies has become a matter of public concern. Now everyone has an opinion on Hegseth, Anthropic, and OpenAI, and social media is full of debate.

Such a debate can be healthy, and perhaps it will prove to be a step in bringing Congress up to speed on the issues. The risk in the meantime, though, is that the terms of AI model usage are being politicized. You can think public debate is great, as I do, without much trusting Congress to do the right thing. Imagine that Congress passed a bill outlining when humans need to be in the “kill loop” for military systems, or what kind of foundation models the military should be working with in the first place. Would that legislation still be timely a few years down the road? Probably not, and it might be obsolete before the legislation was signed. We are just not ready to build a very formal system of regulation for a technology that is changing so rapidly. So whatever you think of the details of the recent incident, its main import is how it will change AI regulation looking forward.

I am not sure Anthropic had realistic expectations about what concessions it might win from the U.S. military. But for me, the main villain in the story is Pete Hegseth. By attacking Anthropic, painting it as a “woke” AI, and threatening to cut off its normal ability to do business, he turned a solvable contract dispute into an ugly public battle of wills. The United States government, when it has a disagreement with a company, should not respond by essentially trying to blacklist the firm. That politicizes our entire economy, and over the longer run it is not going to encourage investment in the all-important AI sector.

In the longer run, the legacy of Hegseth here will be to diminish the say of the military over AI developments, and increase the role of Congress, a possibly Democratic Congress at that.

Exactly who is it that should be happy here?