Day 10: UDP Support for High-Throughput Log Shipping

What We’re Building Today

Why This Matters: The TCP Tax at Scale

> When Netflix ships millions of playback events per second or Uber processes location updates from millions of drivers, TCP’s reliability guarantees become a performance bottleneck. TCP’s three-way handshake, congestion control, and guaranteed delivery add 40-100ms latency per connection and consume significant server resources managing connection state.

> UDP eliminates these overheads, offering 3-5x higher throughput for log shipping workloads where occasional packet loss is acceptable. The trade-off? You inherit responsibility for handling packet loss, ordering, and flow control at the application layer. Today’s implementation demonstrates how companies like Datadog and Splunk achieve massive log ingestion rates while maintaining acceptable reliability through selective UDP usage and intelligent fallback mechanisms.

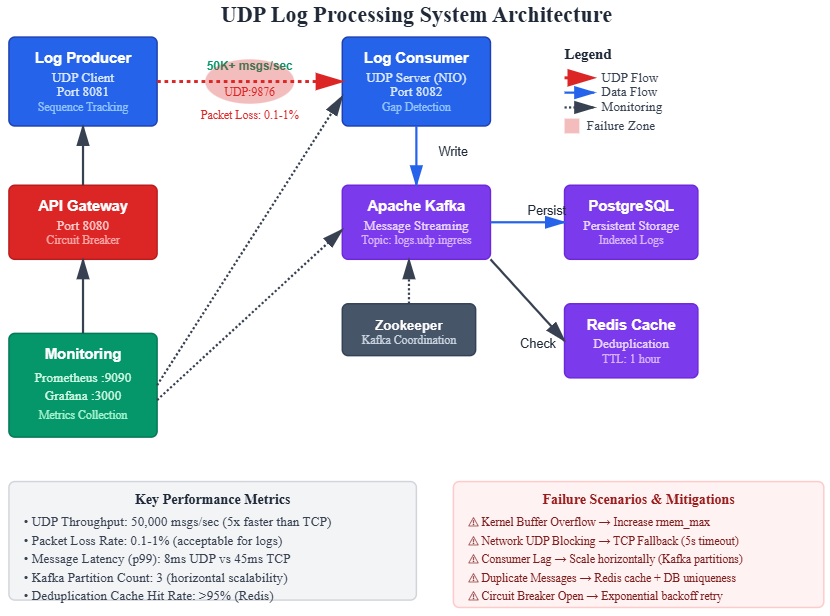

System Design Deep Dive

### Pattern 1: Protocol Selection Strategy

The fundamental architectural decision is when to use UDP versus TCP. The industry pattern: UDP for high-volume telemetry (metrics, logs, traces) where individual message loss is tolerable, and TCP for critical transactional data where guaranteed delivery is non-negotiable.

Trade-off Analysis:

Anti-pattern: Implementing custom reliability protocols over UDP. Don’t rebuild TCP. If you need guaranteed delivery with ordering, use TCP or a battle-tested protocol like QUIC.
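
The selection rule above can be sketched as a small routing function. This is only an illustration: the category names and the `choose_transport` helper are hypothetical, and a real system would likely drive the mapping from configuration.

```python
from enum import Enum


class Transport(Enum):
    UDP = "udp"
    TCP = "tcp"


# Hypothetical category names, used here only for illustration.
LOSS_TOLERANT = {"metric", "log", "trace"}


def choose_transport(category: str) -> Transport:
    """Return UDP for loss-tolerant telemetry and TCP for everything else."""
    if category in LOSS_TOLERANT:
        return Transport.UDP
    # Unknown or critical categories default to the reliable transport.
    return Transport.TCP
```

Defaulting unknown categories to TCP is a deliberate choice in this sketch: a misclassified message costs some throughput rather than being silently lost.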

### Pattern 2: Application-Level Sequencing

Since UDP doesn’t guarantee ordering, implement sequence numbers at the application layer. This enables:

Critical insight: Sequence numbers must be monotonic and wrap-safe (use 64-bit integers). Twitter’s engineering team documented a production incident where 32-bit sequence numbers wrapped after roughly 4.29 billion (2^32) messages, causing duplicate detection to fail spectacularly.

Performance implication: Maintaining ordered buffers for out-of-order packets adds memory overhead. Limit the buffer size to prevent memory exhaustion attacks (at most 1000 packets or 10 MB per connection).
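
A minimal sketch of a bounded reorder buffer follows, assuming sequence numbers start at 0 and increase by one per packet. The `ReorderBuffer` class and its counters are hypothetical names, and only the packet-count limit is enforced here (not the byte limit). When the buffer overflows, the sketch gives up on the missing packets and skips ahead instead of growing without bound.

```python
class ReorderBuffer:
    """Deliver payloads in sequence order, holding early arrivals in a bounded buffer."""

    def __init__(self, max_packets: int = 1000) -> None:
        self.next_seq = 0                     # next sequence number to deliver
        self.pending: dict[int, bytes] = {}   # early arrivals keyed by sequence number
        self.max_packets = max_packets
        self.lost = 0                         # sequence numbers we gave up waiting for
        self.stale_or_duplicate = 0           # packets already delivered or skipped past

    def receive(self, seq: int, payload: bytes) -> list[bytes]:
        """Accept one packet and return every payload that is now deliverable in order."""
        if seq < self.next_seq or seq in self.pending:
            self.stale_or_duplicate += 1
            return []
        self.pending[seq] = payload
        if len(self.pending) > self.max_packets:
            # Buffer is full: stop waiting for the gap and jump to the oldest buffered packet.
            oldest = min(self.pending)
            self.lost += oldest - self.next_seq
            self.next_seq = oldest
        ready = []
        while self.next_seq in self.pending:
            ready.append(self.pending.pop(self.next_seq))
            self.next_seq += 1
        return ready
```

Python integers do not overflow, so wrap-around is not an issue in this sketch; in a language with fixed-width integers the counter would be a 64-bit type, as recommended above.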

### Pattern 3: Adaptive Protocol Switching

Implement runtime protocol switching based on observed network conditions:

    IF (packet_loss_rate > 5% over 60s window) THEN
        switch_to_TCP()
        backoff_time = min(backoff_time * 2, 300s)
    ELSE IF (packet_loss_rate < 0.5% AND using_TCP) THEN
        try_UDP_after(backoff_time)

Why this works: Network conditions are dynamic. A system shipping logs at 4 AM with 0.1% loss might face 8% loss during peak hours when shared infrastructure is saturated. Automatic switching prevents alerting fatigue while maintaining throughput.

Failure mode to avoid: Rapid protocol oscillation (switching every few seconds) creates connection churn. Implement exponential backoff when switching back to UDP: 30s, 60s, 120s, capped at 5 minutes.
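
The switching rule and its backoff can be sketched in a few lines. The `ProtocolSwitcher` class is a hypothetical name, and the sketch assumes the caller supplies a loss rate measured over the trailing 60-second window (how loss is estimated while on TCP, for example with UDP probes, is left open) along with a clock value in seconds.

```python
class ProtocolSwitcher:
    """Fall back to TCP on high loss; retry UDP only after an exponentially growing wait."""

    LOSS_HIGH = 0.05       # switch to TCP above 5% loss
    LOSS_LOW = 0.005       # consider UDP again below 0.5% loss
    BACKOFF_START = 30.0   # seconds before the first UDP retry
    BACKOFF_MAX = 300.0    # cap at 5 minutes

    def __init__(self) -> None:
        self.protocol = "udp"
        self.backoff = self.BACKOFF_START
        self.retry_at = 0.0   # earliest time a switch back to UDP is allowed

    def update(self, loss_rate: float, now: float) -> str:
        """Feed the latest windowed loss rate and return the protocol to use."""
        if self.protocol == "udp" and loss_rate > self.LOSS_HIGH:
            self.protocol = "tcp"
            self.retry_at = now + self.backoff
            # Double the wait for the next fallback: 30s, 60s, 120s, ... capped at 300s.
            self.backoff = min(self.backoff * 2, self.BACKOFF_MAX)
        elif (self.protocol == "tcp" and loss_rate < self.LOSS_LOW
              and now >= self.retry_at):
            self.protocol = "udp"
        return self.protocol
```

Passing `now` in explicitly (for example from `time.monotonic()`) keeps the logic deterministic and easy to test. Resetting the backoff after a long stable period on UDP is omitted here.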

### Pattern 4: Batching and Framing

Ethernet’s MTU (Maximum Transmission Unit) is typically 1500 bytes, which leaves 1472 bytes of UDP payload after the 20-byte IPv4 header and the 8-byte UDP header. Pack multiple log events into a single UDP packet to amortize per-packet overhead:

Framing strategy:

    [4 bytes: batch size][4 bytes: sequence number][2 bytes: message 1 length][message 1 data][2 bytes: message 2 length][message 2 data]...
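
One way to encode and decode this layout uses Python's `struct` module. Two points are assumptions rather than something the layout specifies: "batch size" is read as the number of messages in the packet, and all integers are in network (big-endian) byte order. The function names are hypothetical.

```python
import struct

HEADER = struct.Struct("!II")   # batch size (assumed: message count), sequence number
LENGTH = struct.Struct("!H")    # 2-byte length prefix before each message
MAX_PAYLOAD = 1472              # 1500-byte MTU minus IPv4 (20) and UDP (8) headers


def encode_batch(seq: int, messages: list[bytes]) -> bytes:
    """Pack messages into one datagram payload using the layout above."""
    parts = [HEADER.pack(len(messages), seq)]
    for msg in messages:
        parts.append(LENGTH.pack(len(msg)))
        parts.append(msg)
    packet = b"".join(parts)
    if len(packet) > MAX_PAYLOAD:
        raise ValueError(f"batch is {len(packet)} bytes, limit is {MAX_PAYLOAD}")
    return packet


def decode_batch(packet: bytes) -> tuple[int, list[bytes]]:
    """Return (sequence number, messages); raise on a truncated packet."""
    count, seq = HEADER.unpack_from(packet, 0)
    offset = HEADER.size
    messages = []
    for _ in range(count):
        (length,) = LENGTH.unpack_from(packet, offset)
        offset += LENGTH.size
        end = offset + length
        if end > len(packet):
            raise ValueError("packet truncated inside a message")
        messages.append(packet[offset:end])
        offset = end
    return seq, messages
```

The layout's 4-byte sequence field is kept as given; widening it to 8 bytes (`"!IQ"`) would match the 64-bit recommendation from Pattern 2.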

Trade-off: Larger batches improve network efficiency but increase the blast radius of packet loss: one lost 1400-byte packet containing 20 log events loses all 20 messages. Benchmarks showed an optimal batch size of 10-15 messages per packet for typical 80-byte log events.
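
The arithmetic behind these numbers can be checked with a short calculation. It assumes the framing layout described above (an 8-byte header plus a 2-byte length prefix per message) and a 1472-byte payload limit; the helper names are hypothetical.

```python
MAX_PAYLOAD = 1472        # bytes available in one datagram on a 1500-byte MTU (IPv4)
HEADER_BYTES = 8          # 4-byte batch size + 4-byte sequence number
LENGTH_PREFIX_BYTES = 2   # per-message length field


def max_events_per_packet(event_size: int, payload: int = MAX_PAYLOAD) -> int:
    """Largest number of fixed-size events that fit in one packet."""
    return (payload - HEADER_BYTES) // (event_size + LENGTH_PREFIX_BYTES)


def packet_size(events: int, event_size: int) -> int:
    """Bytes used by a batch of fixed-size events, including framing."""
    return HEADER_BYTES + events * (event_size + LENGTH_PREFIX_BYTES)


print(max_events_per_packet(80))   # 17
print(packet_size(10, 80))         # 828
print(packet_size(15, 80))         # 1238
```

So an 80-byte event stream could fit up to 17 events per packet, and the 10-15 range leaves headroom while limiting how many events a single lost packet takes with it.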

### Pattern 5: Server-Side Load Shedding

High-throughput UDP servers face a unique challenge: the kernel’s UDP receive buffer can overflow under load, silently dropping packets before your application reads them. Implement explicit load shedding:

Linux tuning:

    # Increase UDP receive buffer to 25MB
    sysctl -w net.core.rmem_max=26214400
    sysctl -w net.core.rmem_default=26214400
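
The kernel limits only set a ceiling; the application can also request a larger buffer on its own socket with `SO_RCVBUF`. The sketch below is a minimal illustration with a hypothetical `open_udp_receiver` helper. On Linux the request is silently capped at `net.core.rmem_max`, and the value reported back is typically double what was granted, so it is worth checking the result instead of assuming the request succeeded.

```python
import socket

REQUESTED_RCVBUF = 26_214_400  # 25 MiB, matching the sysctl values above


def open_udp_receiver(host: str = "127.0.0.1", port: int = 0,
                      rcvbuf: int = REQUESTED_RCVBUF):
    """Open a UDP socket, request a large receive buffer, and report what was granted."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    try:
        sock.setsockopt(socket.SOL_SOCKET, socket.SO_RCVBUF, rcvbuf)
    except OSError:
        # Some platforms reject oversized requests outright; keep the default size.
        pass
    granted = sock.getsockopt(socket.SOL_SOCKET, socket.SO_RCVBUF)
    sock.bind((host, port))  # port 0 lets the OS pick a free port
    return sock, granted


if __name__ == "__main__":
    sock, granted = open_udp_receiver()
    print(f"receive buffer granted: {granted} bytes")
    sock.close()
```

If the granted size is far below the request, the kernel ceiling is probably still at its default and the sysctl settings have not taken effect.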

Application-level shedding: Track the processing queue depth. When the queue exceeds a threshold (e.g., 10,000 pending messages), send a NACK to the client, signaling it to slow down or switch protocols.
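
A rough sketch of the queue-depth check follows. The `SheddingQueue` class is a hypothetical name, and the NACK itself is left to the caller because the wire format is not specified here; `offer` simply reports whether the message was accepted.

```python
from collections import deque
from typing import Optional


class SheddingQueue:
    """Bounded processing queue that refuses new work once a depth threshold is reached."""

    def __init__(self, threshold: int = 10_000) -> None:
        self.items: deque[bytes] = deque()
        self.threshold = threshold
        self.shed = 0   # messages refused because the queue was full

    def offer(self, message: bytes) -> bool:
        """Return True if queued; False means the caller should NACK the sender."""
        if len(self.items) >= self.threshold:
            self.shed += 1
            return False
        self.items.append(message)
        return True

    def take(self) -> Optional[bytes]:
        """Remove and return the oldest queued message, or None if the queue is empty."""
        return self.items.popleft() if self.items else None
```

In a receive loop, a `False` return from `offer` would be the point to send the NACK back to the datagram's source address. Rate-limiting those NACKs so an overloaded server does not add to its own load is a natural refinement.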

Real-world example: Cloudflare’s logging infrastructure processes 10M requests/second. They discovered that kernel buffer overflows were their primary source of packet loss. The solution: pre-allocate large buffers and implement backpressure signaling to upstream clients.

[Read more](https://sdcourse.substack.com/p/day-10-udp-support-for-high-throughput)
